NASA announced that it will launch the Nancy Grace Roman Space Telescope into orbit in September 2026, eight months ahead of schedule. The new space telescope is expected to deliver 20,000 terabytes of data to astronomers over the course of its life.

That will add to the 57 gigabytes of breathtaking imagery downlinked daily from the James Webb Space Telescope, which launched in 2021, and to the survey starting later this year at the Vera C. Rubin Observatory in the mountains of Chile, which is expected to gather 20 terabytes of data each night.

For comparison, the Hubble Space Telescope, once the gold standard, delivers just 1 to 2 gigabytes of sensor readings each day. It’s been a while since all those readings were pored over by hand, and like everyone else with a pile of data, astronomers are now turning to GPUs to solve their problems.
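
To put those figures side by side, here is a rough back-of-the-envelope sketch in Python. It uses only the numbers quoted above and assumes 1 terabyte equals 1,000 gigabytes; the variable and function names are illustrative, not taken from any observatory's software.

```python
# Daily data volumes quoted in the article, in gigabytes.
# Assumption: 1 TB = 1,000 GB (decimal units).
GB_PER_TB = 1_000

daily_gb = {
    "Hubble (upper figure)": 2,
    "Webb": 57,
    "Rubin (per night)": 20 * GB_PER_TB,
}


def ratio_to_hubble(volumes):
    """Return each source's daily volume as a multiple of Hubble's upper figure."""
    base = volumes["Hubble (upper figure)"]
    return {name: gb / base for name, gb in volumes.items()}


ratios = ratio_to_hubble(daily_gb)
for name, ratio in ratios.items():
    print(f"{name}: {daily_gb[name]:,} GB per day, {ratio:,.1f}x Hubble")

# Roman's projected lifetime total (20,000 TB) expressed as Rubin nights (20 TB each).
roman_total_tb = 20_000
rubin_nights = roman_total_tb / 20
print(f"Roman's lifetime total equals about {rubin_nights:,.0f} Rubin nights")
```

By this rough count, a single Rubin night outweighs Hubble's daily output by four orders of magnitude, which is the scale of the gap pushing astronomers toward GPUs.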

Brant Robertson, a UC Santa Cruz astrophysicist, has had a front-row seat to this step change in science while supporting or using data from these missions. Robertson has spent the past 15 years working with Nvidia to apply GPUs to the problems of understanding space, first through advanced simulations testing theories about supernova explosions, and now by developing the tools to analyze a torrent of data from the newest observatories.

“There’s been this evolution [from] looking at a few objects, to doing CPU-based analyses on large scales of the data set, to then doing GPU-accelerated versions of those same analyses,” he told TechCrunch.

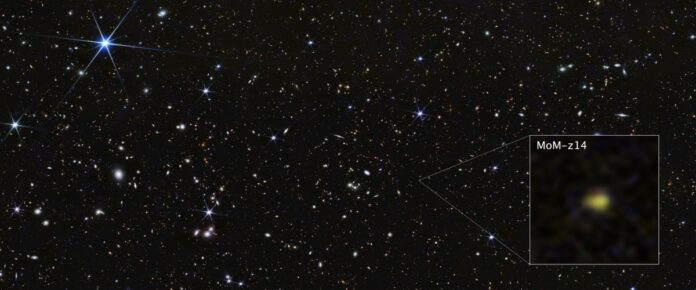

Robertson and then-graduate student Ryan Hausen developed a deep learning model called Morpheus that can pore over large data sets and identify galaxies. Their early AI analysis of Webb data identified a surprising number of a specific type of disc galaxy and added a new wrinkle to theories about the development of our universe.

Now Morpheus is changing with the times: Robertson is switching its architecture from convolutional neural networks to the transformers behind the rise of large language models. That will let the model analyze several times the area it can currently, speeding up its work.

Robertson is also working on generative AI models trained on space telescope data to improve the quality of observations collected by ground-based telescopes, which are distorted by Earth’s atmosphere. Despite advances in rocketry, it’s still hard to get an 8-meter mirror into orbit, so using software to improve Rubin’s observations is the next best thing.

But he’s still feeling the pressure of global demand for GPU access. Robertson has used National Science Foundation funding to build a GPU cluster at UC Santa Cruz, but it is becoming outdated even as more researchers want to apply compute-intensive techniques to their work. The Trump administration proposed cutting the NSF’s budget by 50% in its current budget request.

“People want to do these AI, ML analyses, and GPUs are really the way to do that,” Robertson said. “You have to be entrepreneurial…especially when you’re working kind of at the edge of where the technology is. Universities are very risk averse because they just have constrained resources, so you have to go out and show them that, ‘look, this is where we’re going as a field.’”

Publisher: techcrunch.com