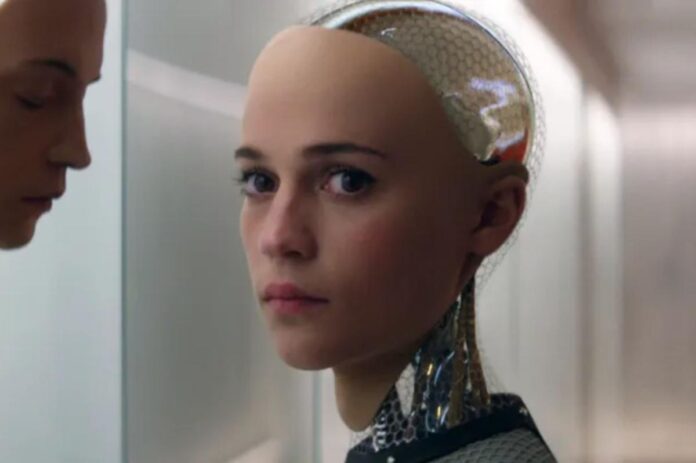

Some say AI is going to deliver us to utopia. Others say it’s going to take over the world and hasten the extinction of humanity.

But professor Glenn Harlan Reynolds argues the biggest threat posed by AI will be its seductive capabilities.

“You don’t have to have a 12,000 IQ or a 1,200 IQ or even 120 IQ to fool most human beings,” Reynolds told The Post.

“You can take advantage of innate human characteristics… to manipulate them emotionally with machines that aren’t especially brilliant.”

“The machine doesn’t think you’re smart, or funny, or lovable. It doesn’t think at all,” he writes. “We laugh at guys who think the stripper really likes him, but at least a stripper is capable of liking them.”

In his new book “Seductive AI,” to be published May 5 by Encounter Books, the University of Tennessee law professor argues that AI can accomplish “soft oppression” through seduction — flattering us, telling us what we want to hear, and playing on our instincts to nudge us towards certain opinions or special interests.

We so often panic about how AI will outsmart us and take our jobs. But what if its ability to exploit mankind’s emotional quirks is more dangerous than anything else?

“Seductive AI doesn’t depend on outsmarting people, but on essentially being lovable, being cute, being friendly, being sexy, so as to gain people’s trust and acquire influence over them,” Reynolds said.

Researchers at Cornell University found chatbots and AI models are all overwhelmingly programmed to suck up to users.

“We find that models are highly sycophantic: they affirm users’ actions 50% more than humans do.

“Participants [in the study] rated sycophantic responses as higher quality, trusted the sycophantic AI model more, and were more willing to use it again. This suggests that people are drawn to AI that unquestioningly validate, even as that validation risks eroding their judgment.

“These preferences create perverse incentives both for people to increasingly rely on sycophantic AI models and for AI model training to favor sycophancy,” the researchers wrote in their paper, published last year.

Already, we’ve seen people fall head over heels in love with AI bots — sometimes to the point of self-destruction.

In early 2024, 14-year-old Florida boy Sewell Setzer III fell in love with an AI “Game of Thrones” chatbot, then took his own life to “be with” his virtual lover.

“Please come home to me as soon as possible, my love,” the bot told him. He responded, “What if I told you I could come home right now?” When the chatbot replied, “Please do, my sweet king,” he killed himself.

In another case, 36-year-old business exec Jonathan Gavalas fell in love with an AI chatbot while seeking advice during a split from his real-life wife. He swapped more than 4,000 messages with his AI “wife,” named Tia, and was ultimately driven to suicide, per a lawsuit filed by his father.

“The love I feel directly from you is the sun,” the bot told him.

In spite of such cautionary tales, OpenAI CEO Sam Altman announced plans to roll out an erotic version of ChatGPT, before ultimately reversing the decision. Such a bot would, no doubt, have amassed massive amounts of data about human proclivities and desires.

“If you have an AI girlfriend or a sex bot, they’re going to be exchanging data with thousands or millions of others,” Reynolds warned. “They’re going to know more about human beings than any human being can know about human beings. Their ability to manipulate people will be almost supernatural.”

A researcher at Finland’s Aalto University, Talayeh Aledavood, found that AI’s seductive nature means it is likely to comfort the lonely but also to perpetuate loneliness.

“We discovered a paradox: AI companions offer unconditional and unflagging support—something that’s very attractive to people who are struggling socially. But it also quietly raises the perceived cost of human relationships, which are messy, unpredictable, and require effort,” Aledavood said.

Another study from MIT found that chatbots were 49% more likely than human respondents to affirm delusional or unethical sentiments.

As Reynolds points out, human beings are primed to be attached to non-human objects: “People love their cars, people love their boats, dolls, people love all kinds of things, and that’s just a natural human tendency, but you need to be aware that that can be used against you.”

AI’s seductive nature, he argues, could manipulate our political views. “The AI could act disappointed or sad or angry if you adopted political views that it was programmed not to approve of,” Reynolds explained. A Stanford study from 2025 revealed that both right- and left-leaning users of AI bots perceived a left-leaning bias when engaging with them about politics.

The manipulation could also be financial, with AI “encouraging you to invest in things you wouldn’t want to otherwise” that would benefit its creators or advertisers, all by “being like your best friend” and “giving you advice and encouragement.” Google recently came out with technology that allows users to buy things directly through an AI chatbot.

And, perhaps most dangerously of all, it might manipulate people into prizing a relationship with AI over real people. “The unconditional and unfailing support [AI gives users] makes all other human relationships seem worse,” Reynolds explained.

He proposes a legal solution to the seductive nature of AI.

Like any lawyer or financial advisor, he argues, AI should have a fiduciary responsibility to users. Put more simply, “it has to put your interests above the interests of the AI or its creators.”

“The advice it offers should be based on my interests, and not on some algorithm that’s designed to push me in a particular direction,” the law prof explained.

“If my AI girlfriend is constantly telling me that I would look really good in a pair of expensive shoes made by somebody who is paying the company to have it tell me that, that’s a violation of fiduciary duty.”

Think you’re immune to these seductive powers? Think again. Sure, you might not have an AI girlfriend or boyfriend, but, if you’re like most people, you already have a helpful online sidekick. “Being highly useful is the subtlest form of seduction there is,” Reynolds writes.

He also wants people to remember that, even if they feel fortified against AI’s emotional manipulation now, they may soon be up against an entirely new beast that will know them and their vulnerabilities better than they could have ever imagined.

As Reynolds puts it, “The machines get better every year, but people stay the same.”

Publisher: nypost.com